Numerical methods for solving linear least squares problems

- Published: June 1965

- Volume 7 , pages 206–216, ( 1965 )

Cite this article

- G. Golub 1

2466 Accesses

640 Citations

6 Altmetric

Explore all metrics

A common problem in a Computer Laboratory is that of finding linear least squares solutions. These problems arise in a variety of areas and in a variety of contexts. Linear least squares problems are particularly difficult to solve because they frequently involve large quantities of data, and they are ill-conditioned by their very nature. In this paper, we shall consider stable numerical methods for handling these problems. Our basic tool is a matrix decomposition based on orthogonal Householder transformations.

This is a preview of subscription content, log in via an institution to check access.

Access this article

Price includes VAT (Russian Federation)

Instant access to the full article PDF.

Rent this article via DeepDyve

Institutional subscriptions

Similar content being viewed by others

Solving large linear least squares problems with linear equality constraints

Iterative Solution Methods

Golub, G. H. , and R. S. Varga : Chebyshev Semi-Iterative Methods, Successive Over-Relaxation Iterative Methods, and Second Order Richardson Iterative Method. Numer. Math. 3 , 147–168 (1961).

Article Google Scholar

Householder, A. S. : Unitary Triangularization of a Nonsymmetric Matrix. J. Assoc. Comput. Mach. 5 , 339–342 (1958).

Google Scholar

Läuchli, P. : Jordan-Elimination und Ausgleichung nach kleinsten Quadraten. Numer. Math. 3 , 226–240 (1961).

Linnik, Y. : Method of Least Squares and Principles of the Theory of Observations. Translated from Russian by R. C. Elandt ., New York: Pergamon Press 1961.

McKeeman, W. M. : Crout with Equilibration and Iteration. Algorithm 135. Comm. Assoc. Comput. Mach. 5 , 553–555 (1962).

Osborne, E. E. : On Least Squares Solutions of Linear Equations. J. Assoc. Comput. Mach. 8 , 628–636 (1961).

Riley, J. D. : Solving Systems of Linear Equations with a Positive Definite, Symmetric, but Possibly Ill-Conditioned Matrix. Math. Tables Aids Comput. 9 , 96–101 (1956).

Waugh, F. V. , and P. S. Dwyer : Compact Computation of the Inverse of a Matrix. Ann. Math. Stat. 16 , 259–271 (1945).

Wilkinson, J. H. : Householders Method for the Solution of the Algebraic Eigenproblem. Comput. J. 3 , 23–27 (1960).

—: Error Analysis of Direct Methods of Matrix Inversion. J. Assoc. Comput. Mach. 8 , 281–330 (1961).

Download references

Author information

Authors and affiliations.

Computation Center, Stanford University, 94305, Stanford, California, USA

You can also search for this author in PubMed Google Scholar

Additional information

Reproduction in Whole or in Part is permitted for any Purpose of the United States government. This report was supported in part by Office of Naval Research Contract Nonr-225(37) (NR 044-11) at Stanford University.

Rights and permissions

Reprints and permissions

About this article

Golub, G. Numerical methods for solving linear least squares problems. Numer. Math. 7 , 206–216 (1965). https://doi.org/10.1007/BF01436075

Download citation

Received : 24 September 1964

Issue Date : June 1965

DOI : https://doi.org/10.1007/BF01436075

Share this article

Anyone you share the following link with will be able to read this content:

Sorry, a shareable link is not currently available for this article.

Provided by the Springer Nature SharedIt content-sharing initiative

- Mathematical Method

- Computer Laboratory

- Matrix Decomposition

- Householder Transformation

- Find a journal

- Publish with us

- Track your research

IMAGES

VIDEO

COMMENTS

NUMERICALLY EFFICIENT METHODS FOR SOLVING LEAST SQUARES PROBLEMS 5 The 2-norm is the most convenient one for our purposes because it is associated with an inner product. Once we have an inner product de ned on a vector space, we can de ne both a norm and distance for the inner product space: De nition 3.2. Suppose that V is an inner product space.

6,672. PDF. This paper aims to present numerically stable and computationally efficient algorithms for computing the solution to Least Squares Problems. Computing the solution to Least Squares Problems is of great importance in a wide range of fields ranging from numerical linear algebra to econometrics and optimization.

Numerical Methods for Solving Linear Least Squares Problems 215. thecalculation from the beginning again if the method of orthogonalization is used. Let R1, cl correspond to the original data after ithas been reduced by orthogonal transformations and let A2, b2 correspond to the additional observa-tions. Then the up-dated least squares solution ...

An accessible text for the study of numerical methods for solving least squares problems remains an essential part of a scientific software foundation. This book has served this purpose well. Numerical analysts, statisticians, and engineers have developed techniques and nomenclature for the least squares problems of their own discipline.

Modern numerical methods for solving least squares problems are sur veyed in the two comprehensive monographs by Lawson and Hanson (1995) and Bjorck (1996). The latter contains a bibliography of 860 references, indicating the considerable research interest in these problems. Hansen

Numerical solution of linear least-squares problems is a key computational task in science and ... underlying matrices have full rank and are well-conditioned. However, there are few efficient and robust approaches to solving the linear least-squares problems in which the ... solving rank-deficient linear least-squares problems. Our proposed ...

This paper considers stable numerical methods for handling linear least squares problems that frequently involve large quantities of data, and they are ill-conditioned by their very nature. A common problem in a Computer Laboratory is that of finding linear least squares solutions. These problems arise in a variety of areas and in a variety of contexts. Linear least squares problems are ...

Numerical Methods for Least Squares Problems: Second Edition. Author(s): Åke Björck; Book Series. Advances in Design and Control; ... banded least squares, sparse problems, regularized least squares, partial least squares, Krylov subspace methods, preconditioners for least squares,

1.1. Introduction The linear least squares problem is a computational problem of primary importance, which originally arose from the need to fit a linear mathematical model to given observations. In order to reduce the influence of errors in the observations one would then like to use a greater number of measurements than the number of unknown parameters in the model. The resulting problem is ...

normal equations for these least-squares adjustment. problems. In particular, it is shown how a block-. orthogonal decomposition method can be used in conjunction. with a nested dissection scheme to produce an algorithm. for solving such problems which combines efficient data. management with numerical stability.

The total least squares (TLS) method is a well-known technique for solving an overdetermined linear system of equations Ax ≈ b, that is appropriate when both the coefficient matrix A and the right-hand side vector b are contaminated by some noise. For ill-posed TLS poblems, regularization techniques are necessary to stabilize the computed solution; otherwise, TLS produces a noise-dominant ...

Abstract : We have studied a number of computational problems in numerical linear algebra. Most of these problems arise in statistical computations. They include the following: (1) Application of the conjugate gradient method to nonorthogonal analysis of variance; (2) Use of orthogonalization procedures in geodetic problems; (3) Algorithms for computing sample variance; (4) Truncated Newton ...

It is often desired to solve a sequence of modified least squares problems. min x ‖ A x − b ‖ 2, A ∈ R m × n, 3.1.1. where in each step rows of data in ( A, b) are added, deleted, or both. This need arises, e.g., when data are arriving sequentially. In various time-series problems a window moving over the data is used; when a new ...

The numerical methods for linear least squares are important because linear regression models are among the most important types of model, both as formal statistical models and for exploration of data-sets. The majority of statistical computer packages contain facilities for regression analysis that make use of linear least squares computations.

The method of least squares was discovered by Gauss in 1795 and has since become the principal tool for reducing the influence of errors when fitting models to given observations. Today, applications of least squares arise in a great number of scientific areas, such as statistics, geodetics, signal processing, and control. In the last 20 years there has been a great increase in the capacity ...

The linear least square problem is to find a vector x of the size n × 1 which will minimize kAx − bk2. In the case when m = n and the matrix A is nonsingular we can get solution to this problem as. x = A−1b. However, when m > n (more equations than unknowns) the problem is called overdetermined. Opposite, when m < n (more unknowns than ...

A common problem in a Computer Laboratory is that of finding linear least squares solutions. These problems arise in a variety of areas and in a variety of contexts. Linear least squares problems are particularly difficult to solve because they frequently involve large quantities of data, and they are ill-conditioned by their very nature. In this paper, we shall consider stable numerical ...

In turn, that is why in spite of having to solve ill-conditioned linear systems such approximations are numerically computable with standard least squares methods [10], [11], [23]. We refer to [23, §4.1 and §4.2] for a precise statement on the convergence behavior in this setting.

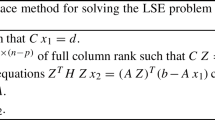

Mathematics. 1997. TLDR. This paper gives a new analysis of the weighting method for solving a least squares problem with linear equality constraints, based on the QR decomposition, that exhibits many features of the algorithm and suggests a natural criterion for chosing the weighted factor. Expand.

If you are going to solve a least squares problem of any magnitude, you need Numerical Methods for Least Squares Problems …' B. A. Finlayson, Applied Mechanics Review "A comprehensive and up-to-date treatment that includes many recent developments. In addition to basic methods, it covers methods for modified and generalized least squares ...

7.1. Introduction In this chapter we consider the iterative solution of large sparse least squares problems minx‖Ax−b‖2. We assume in the following, unless otherwise stated, that A has full column rank, so that the problem has a unique solution. In principle any iterative method for symmetric positive definite linear systems can be applied to the system of normal equations . The explicit ...

An accessible text for the study of numerical methods for solving least squares problems remains an essential part of a scientific software foundation. This book has served this purpose well. Numerical analysts, statisticians, and engineers have developed techniques and nomenclature for the least squares problems of their own discipline.

It is proved that this method converges to the unique solution of the linear least‐squares problem when its coefficient matrix is of full rank, with the number of rows being no less than the ...

Mathematical and Statistical Properties of Least Squares Solutions. 2. Basic Numerical Methods. 3. Modified Least Squares Problems. 4. Generalized Least Squares Problems. 5. Constrained Least Squares Problems.

The iteratively reweighted least squares (IRLS) approach is adopted to solve this problem, in which the least l p-norm approximation is decomposed into a series of weighted least squares (WLS) subproblems. A matrix-based conjugate gradient (CG) algorithm is presented to solve those WLS subproblems, which is very efficient due to the use of ...