Have a language expert improve your writing

Run a free plagiarism check in 10 minutes, generate accurate citations for free.

- Knowledge Base

Methodology

- What Is a Case-Control Study? | Definition & Examples

What Is a Case-Control Study? | Definition & Examples

Published on February 4, 2023 by Tegan George . Revised on June 22, 2023.

A case-control study is an experimental design that compares a group of participants possessing a condition of interest to a very similar group lacking that condition. Here, the participants possessing the attribute of study, such as a disease, are called the “case,” and those without it are the “control.”

It’s important to remember that the case group is chosen because they already possess the attribute of interest. The point of the control group is to facilitate investigation, e.g., studying whether the case group systematically exhibits that attribute more than the control group does.

Table of contents

When to use a case-control study, examples of case-control studies, advantages and disadvantages of case-control studies, other interesting articles, frequently asked questions.

Case-control studies are a type of observational study often used in fields like medical research, environmental health, or epidemiology. While most observational studies are qualitative in nature, case-control studies can also be quantitative , and they often are in healthcare settings. Case-control studies can be used for both exploratory and explanatory research , and they are a good choice for studying research topics like disease exposure and health outcomes.

A case-control study may be a good fit for your research if it meets the following criteria.

- Data on exposure (e.g., to a chemical or a pesticide) are difficult to obtain or expensive.

- The disease associated with the exposure you’re studying has a long incubation period or is rare or under-studied (e.g., AIDS in the early 1980s).

- The population you are studying is difficult to contact for follow-up questions (e.g., asylum seekers).

Retrospective cohort studies use existing secondary research data, such as medical records or databases, to identify a group of people with a common exposure or risk factor and to observe their outcomes over time. Case-control studies conduct primary research , comparing a group of participants possessing a condition of interest to a very similar group lacking that condition in real time.

Receive feedback on language, structure, and formatting

Professional editors proofread and edit your paper by focusing on:

- Academic style

- Vague sentences

- Style consistency

See an example

Case-control studies are common in fields like epidemiology, healthcare, and psychology.

You would then collect data on your participants’ exposure to contaminated drinking water, focusing on variables such as the source of said water and the duration of exposure, for both groups. You could then compare the two to determine if there is a relationship between drinking water contamination and the risk of developing a gastrointestinal illness. Example: Healthcare case-control study You are interested in the relationship between the dietary intake of a particular vitamin (e.g., vitamin D) and the risk of developing osteoporosis later in life. Here, the case group would be individuals who have been diagnosed with osteoporosis, while the control group would be individuals without osteoporosis.

You would then collect information on dietary intake of vitamin D for both the cases and controls and compare the two groups to determine if there is a relationship between vitamin D intake and the risk of developing osteoporosis. Example: Psychology case-control study You are studying the relationship between early-childhood stress and the likelihood of later developing post-traumatic stress disorder (PTSD). Here, the case group would be individuals who have been diagnosed with PTSD, while the control group would be individuals without PTSD.

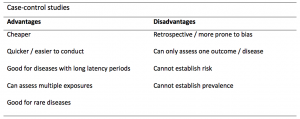

Case-control studies are a solid research method choice, but they come with distinct advantages and disadvantages.

Advantages of case-control studies

- Case-control studies are a great choice if you have any ethical considerations about your participants that could preclude you from using a traditional experimental design .

- Case-control studies are time efficient and fairly inexpensive to conduct because they require fewer subjects than other research methods .

- If there were multiple exposures leading to a single outcome, case-control studies can incorporate that. As such, they truly shine when used to study rare outcomes or outbreaks of a particular disease .

Disadvantages of case-control studies

- Case-control studies, similarly to observational studies, run a high risk of research biases . They are particularly susceptible to observer bias , recall bias , and interviewer bias.

- In the case of very rare exposures of the outcome studied, attempting to conduct a case-control study can be very time consuming and inefficient .

- Case-control studies in general have low internal validity and are not always credible.

Case-control studies by design focus on one singular outcome. This makes them very rigid and not generalizable , as no extrapolation can be made about other outcomes like risk recurrence or future exposure threat. This leads to less satisfying results than other methodological choices.

If you want to know more about statistics , methodology , or research bias , make sure to check out some of our other articles with explanations and examples.

- Student’s t -distribution

- Normal distribution

- Null and Alternative Hypotheses

- Chi square tests

- Confidence interval

- Quartiles & Quantiles

- Cluster sampling

- Stratified sampling

- Data cleansing

- Reproducibility vs Replicability

- Peer review

- Prospective cohort study

Research bias

- Implicit bias

- Cognitive bias

- Placebo effect

- Hawthorne effect

- Hindsight bias

- Affect heuristic

- Social desirability bias

Prevent plagiarism. Run a free check.

A case-control study differs from a cohort study because cohort studies are more longitudinal in nature and do not necessarily require a control group .

While one may be added if the investigator so chooses, members of the cohort are primarily selected because of a shared characteristic among them. In particular, retrospective cohort studies are designed to follow a group of people with a common exposure or risk factor over time and observe their outcomes.

Case-control studies, in contrast, require both a case group and a control group, as suggested by their name, and usually are used to identify risk factors for a disease by comparing cases and controls.

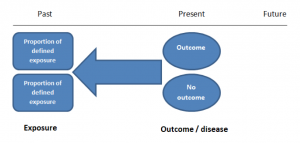

A case-control study differs from a cross-sectional study because case-control studies are naturally retrospective in nature, looking backward in time to identify exposures that may have occurred before the development of the disease.

On the other hand, cross-sectional studies collect data on a population at a single point in time. The goal here is to describe the characteristics of the population, such as their age, gender identity, or health status, and understand the distribution and relationships of these characteristics.

Cases and controls are selected for a case-control study based on their inherent characteristics. Participants already possessing the condition of interest form the “case,” while those without form the “control.”

Keep in mind that by definition the case group is chosen because they already possess the attribute of interest. The point of the control group is to facilitate investigation, e.g., studying whether the case group systematically exhibits that attribute more than the control group does.

The strength of the association between an exposure and a disease in a case-control study can be measured using a few different statistical measures , such as odds ratios (ORs) and relative risk (RR).

No, case-control studies cannot establish causality as a standalone measure.

As observational studies , they can suggest associations between an exposure and a disease, but they cannot prove without a doubt that the exposure causes the disease. In particular, issues arising from timing, research biases like recall bias , and the selection of variables lead to low internal validity and the inability to determine causality.

Sources in this article

We strongly encourage students to use sources in their work. You can cite our article (APA Style) or take a deep dive into the articles below.

George, T. (2023, June 22). What Is a Case-Control Study? | Definition & Examples. Scribbr. Retrieved June 24, 2024, from https://www.scribbr.com/methodology/case-control-study/

Schlesselman, J. J. (1982). Case-Control Studies: Design, Conduct, Analysis (Monographs in Epidemiology and Biostatistics, 2) (Illustrated). Oxford University Press.

Is this article helpful?

Tegan George

Other students also liked, what is an observational study | guide & examples, control groups and treatment groups | uses & examples, cross-sectional study | definition, uses & examples, what is your plagiarism score.

What Is A Case Control Study?

Julia Simkus

Editor at Simply Psychology

BA (Hons) Psychology, Princeton University

Julia Simkus is a graduate of Princeton University with a Bachelor of Arts in Psychology. She is currently studying for a Master's Degree in Counseling for Mental Health and Wellness in September 2023. Julia's research has been published in peer reviewed journals.

Learn about our Editorial Process

Saul Mcleod, PhD

Editor-in-Chief for Simply Psychology

BSc (Hons) Psychology, MRes, PhD, University of Manchester

Saul Mcleod, PhD., is a qualified psychology teacher with over 18 years of experience in further and higher education. He has been published in peer-reviewed journals, including the Journal of Clinical Psychology.

Olivia Guy-Evans, MSc

Associate Editor for Simply Psychology

BSc (Hons) Psychology, MSc Psychology of Education

Olivia Guy-Evans is a writer and associate editor for Simply Psychology. She has previously worked in healthcare and educational sectors.

On This Page:

A case-control study is a research method where two groups of people are compared – those with the condition (cases) and those without (controls). By looking at their past, researchers try to identify what factors might have contributed to the condition in the ‘case’ group.

Explanation

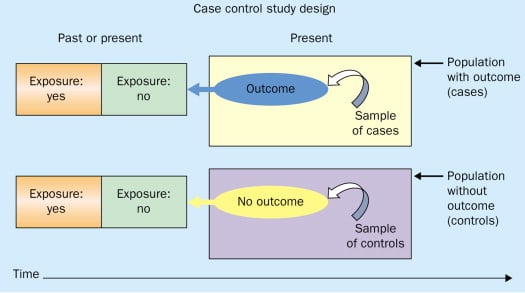

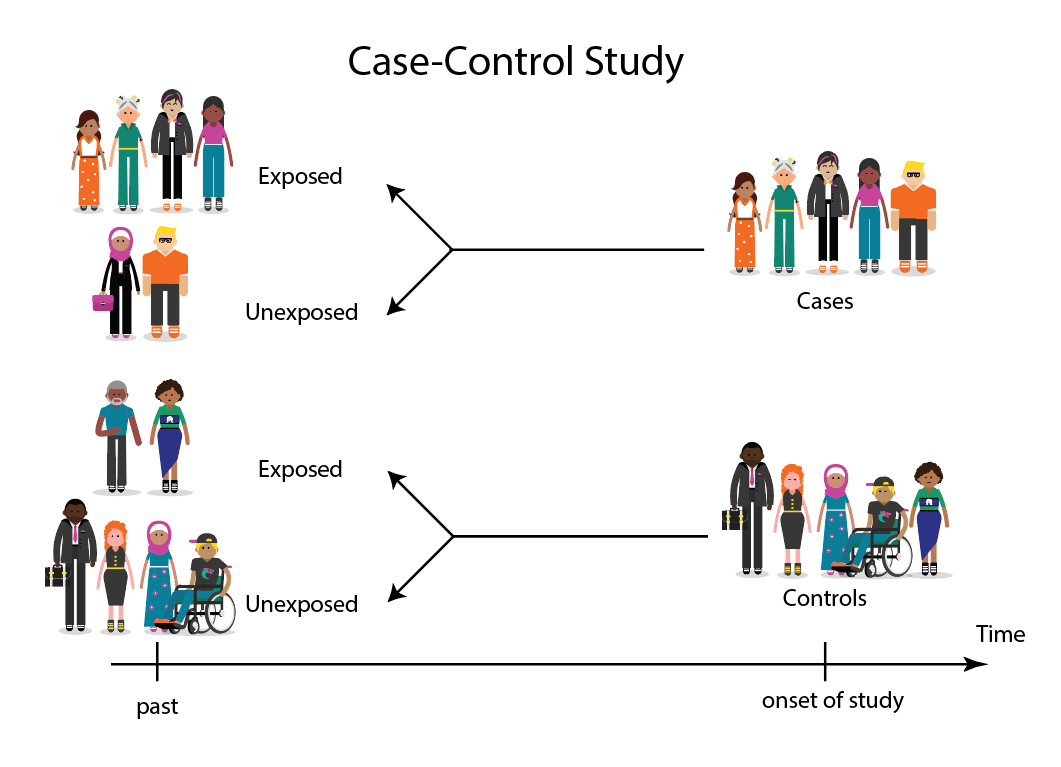

A case-control study looks at people who already have a certain condition (cases) and people who don’t (controls). By comparing these two groups, researchers try to figure out what might have caused the condition. They look into the past to find clues, like habits or experiences, that are different between the two groups.

The “cases” are the individuals with the disease or condition under study, and the “controls” are similar individuals without the disease or condition of interest.

The controls should have similar characteristics (i.e., age, sex, demographic, health status) to the cases to mitigate the effects of confounding variables .

Case-control studies identify any associations between an exposure and an outcome and help researchers form hypotheses about a particular population.

Researchers will first identify the two groups, and then look back in time to investigate which subjects in each group were exposed to the condition.

If the exposure is found more commonly in the cases than the controls, the researcher can hypothesize that the exposure may be linked to the outcome of interest.

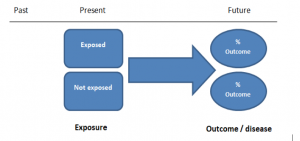

Figure: Schematic diagram of case-control study design. Kenneth F. Schulz and David A. Grimes (2002) Case-control studies: research in reverse . The Lancet Volume 359, Issue 9304, 431 – 434

Quick, inexpensive, and simple

Because these studies use already existing data and do not require any follow-up with subjects, they tend to be quicker and cheaper than other types of research. Case-control studies also do not require large sample sizes.

Beneficial for studying rare diseases

Researchers in case-control studies start with a population of people known to have the target disease instead of following a population and waiting to see who develops it. This enables researchers to identify current cases and enroll a sufficient number of patients with a particular rare disease.

Useful for preliminary research

Case-control studies are beneficial for an initial investigation of a suspected risk factor for a condition. The information obtained from cross-sectional studies then enables researchers to conduct further data analyses to explore any relationships in more depth.

Limitations

Subject to recall bias.

Participants might be unable to remember when they were exposed or omit other details that are important for the study. In addition, those with the outcome are more likely to recall and report exposures more clearly than those without the outcome.

Difficulty finding a suitable control group

It is important that the case group and the control group have almost the same characteristics, such as age, gender, demographics, and health status.

Forming an accurate control group can be challenging, so sometimes researchers enroll multiple control groups to bolster the strength of the case-control study.

Do not demonstrate causation

Case-control studies may prove an association between exposures and outcomes, but they can not demonstrate causation.

A case-control study is an observational study where researchers analyzed two groups of people (cases and controls) to look at factors associated with particular diseases or outcomes.

Below are some examples of case-control studies:

- Investigating the impact of exposure to daylight on the health of office workers (Boubekri et al., 2014).

- Comparing serum vitamin D levels in individuals who experience migraine headaches with their matched controls (Togha et al., 2018).

- Analyzing correlations between parental smoking and childhood asthma (Strachan and Cook, 1998).

- Studying the relationship between elevated concentrations of homocysteine and an increased risk of vascular diseases (Ford et al., 2002).

- Assessing the magnitude of the association between Helicobacter pylori and the incidence of gastric cancer (Helicobacter and Cancer Collaborative Group, 2001).

- Evaluating the association between breast cancer risk and saturated fat intake in postmenopausal women (Howe et al., 1990).

Frequently asked questions

1. what’s the difference between a case-control study and a cross-sectional study.

Case-control studies are different from cross-sectional studies in that case-control studies compare groups retrospectively while cross-sectional studies analyze information about a population at a specific point in time.

In cross-sectional studies , researchers are simply examining a group of participants and depicting what already exists in the population.

2. What’s the difference between a case-control study and a longitudinal study?

Case-control studies compare groups retrospectively, while longitudinal studies can compare groups either retrospectively or prospectively.

In a longitudinal study , researchers monitor a population over an extended period of time, and they can be used to study developmental shifts and understand how certain things change as we age.

In addition, case-control studies look at a single subject or a single case, whereas longitudinal studies can be conducted on a large group of subjects.

3. What’s the difference between a case-control study and a retrospective cohort study?

Case-control studies are retrospective as researchers begin with an outcome and trace backward to investigate exposure; however, they differ from retrospective cohort studies.

In a retrospective cohort study , researchers examine a group before any of the subjects have developed the disease, then examine any factors that differed between the individuals who developed the condition and those who did not.

Thus, the outcome is measured after exposure in retrospective cohort studies, whereas the outcome is measured before the exposure in case-control studies.

Boubekri, M., Cheung, I., Reid, K., Wang, C., & Zee, P. (2014). Impact of windows and daylight exposure on overall health and sleep quality of office workers: a case-control pilot study. Journal of Clinical Sleep Medicine: JCSM: Official Publication of the American Academy of Sleep Medicine, 10 (6), 603-611.

Ford, E. S., Smith, S. J., Stroup, D. F., Steinberg, K. K., Mueller, P. W., & Thacker, S. B. (2002). Homocyst (e) ine and cardiovascular disease: a systematic review of the evidence with special emphasis on case-control studies and nested case-control studies. International journal of epidemiology, 31 (1), 59-70.

Helicobacter and Cancer Collaborative Group. (2001). Gastric cancer and Helicobacter pylori: a combined analysis of 12 case control studies nested within prospective cohorts. Gut, 49 (3), 347-353.

Howe, G. R., Hirohata, T., Hislop, T. G., Iscovich, J. M., Yuan, J. M., Katsouyanni, K., … & Shunzhang, Y. (1990). Dietary factors and risk of breast cancer: combined analysis of 12 case—control studies. JNCI: Journal of the National Cancer Institute, 82 (7), 561-569.

Lewallen, S., & Courtright, P. (1998). Epidemiology in practice: case-control studies. Community eye health, 11 (28), 57–58.

Strachan, D. P., & Cook, D. G. (1998). Parental smoking and childhood asthma: longitudinal and case-control studies. Thorax, 53 (3), 204-212.

Tenny, S., Kerndt, C. C., & Hoffman, M. R. (2021). Case Control Studies. In StatPearls . StatPearls Publishing.

Togha, M., Razeghi Jahromi, S., Ghorbani, Z., Martami, F., & Seifishahpar, M. (2018). Serum Vitamin D Status in a Group of Migraine Patients Compared With Healthy Controls: A Case-Control Study. Headache, 58 (10), 1530-1540.

Further Information

- Schulz, K. F., & Grimes, D. A. (2002). Case-control studies: research in reverse. The Lancet, 359(9304), 431-434.

- What is a case-control study?

- Skip to secondary menu

- Skip to main content

- Skip to primary sidebar

Statistics By Jim

Making statistics intuitive

Case Control Study: Definition, Benefits & Examples

By Jim Frost 2 Comments

What is a Case Control Study?

A case control study is a retrospective, observational study that compares two existing groups. Researchers form these groups based on the existence of a condition in the case group and the lack of that condition in the control group. They evaluate the differences in the histories between these two groups looking for factors that might cause a disease.

By evaluating differences in exposure to risk factors between the case and control groups, researchers can learn which factors are associated with the medical condition.

For example, medical researchers study disease X and use a case-control study design to identify risk factors. They create two groups using available medical records from hospitals. Individuals with disease X are in the case group, while those without it are in the control group. If the case group has more exposure to a risk factor than the control group, that exposure is a potential cause for disease X. However, case-control studies establish only correlation and not causation. Be aware of spurious correlations!

Case-control studies are observational studies because researchers do not control the risk factors—they only observe them. They are retrospective studies because the scientists create the case and control groups after the outcomes for the subjects (e.g., disease vs. no disease) are known.

This post explains the benefits and limitations of case-control studies, controlling confounders, and analyzing and interpreting the results. I close with an example case control study showing how to calculate and interpret the results.

Learn more about Experimental Design: Definition, Types, and Examples .

Related posts : Observational Studies Explained and Control Groups in Experiments

Benefits of a Case Control Study

A case control study is a relatively quick and simple design. They frequently use existing patient data, and the experimenters form the groups after the outcomes are known. Researchers do not conduct an experiment. Instead, they look for differences between the case and control groups that are potential risk factors for the condition. Small groups and individual facilities can conduct case-control studies, unlike other more intensive types of experiments.

Case-control studies are perfect for evaluating outbreaks and rare conditions. Researchers simply need to let a sufficient number of known cases accumulate in an established database. The alternative would be to select a large random sample and hope that the condition afflicts it eventually.

A case control study can provide rapid results during outbreaks where the researchers need quick answers. They are ideal for the preliminary investigation phase, where scientists screen potential risk factors. As such, they can point the way for more thorough, time-consuming, and expensive studies. They are especially beneficial when the current state of science knows little about the connection between risk factors and the medical condition. And when you need to identify potential risk factors quickly!

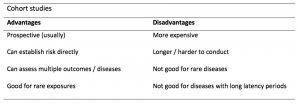

Cohort studies are another type of observational study that are similar to case-control studies, but there are some important differences. To learn more, read my post about Cohort Studies .

Limitations of a Case Control Study

Because case-control studies are observational, they cannot establish causality and provide lower quality evidence than other experimental designs, such as randomized controlled trials . Additionally, as you’ll see in the next section, this type of study is susceptible to confounding variables unless experimenters correctly match traits between the two groups.

A case-control study typically depends on health records. If the necessary data exist in sources available to the researchers, all is good. However, the investigation becomes more complicated if the data are not readily available.

Case-control studies can incorporate biases from the underlying data sources. For example, researchers frequently obtain patient data from hospital records. The population of hospital patients is likely to differ from the general population. Even the control patients are in the hospital for some reason—they likely have serious health problems. Consequently, the subjects in case-control studies are likely to differ from the general population, which reduces the generalizability of the results.

A case-control study cannot estimate incidence or prevalence rates for the disease. The data from these studies do not allow you to calculate the probability of a new person contracting the condition in a given period nor how common it is in the population. This limitation occurs because case-control studies do not use a representative sample.

Case-control studies cannot determine the time between exposure and onset of the medical condition. In fact, case-control studies cannot reliably assess each subject’s exposure to risk factors over time. Longitudinal studies, such as prospective cohort studies, can better make those types of assessment.

Related post : Causation versus Correlation in Statistics

Use Matching to Control Confounders

Because case-control studies are observational studies, they are particularly vulnerable to confounding variables and spurious correlations . A confounder correlates with both the risk factor and the outcome variable. Because observational studies don’t use random assignment to equalize confounders between the case and control groups, they can become unbalanced and affect the results.

Unfortunately, confounders can be the actual cause of the medical condition rather than the risk factor that the researchers identify. If a case-control study does not account for confounding variables, it can bias the results and make them untrustworthy.

Case-control studies typically use trait matching to control confounders. This technique involves selecting study participants for the case and control groups with similar characteristics, which helps equalize the groups for potential confounders. Equalizing confounders limits their impact on the results.

Ultimately, the goal is to create case and control groups that have equal risks for developing the condition/disease outside the risk factors the researchers are explicitly assessing. Matching facilitates valid comparisons between the two groups because the controls are similar to cases. The researchers use subject-area knowledge to identify characteristics that are critical to match.

Note that you cannot assess matching variables as potential risk factors. You’ve intentionally equalized them across the case and control groups and, consequently, they do not correlate with the condition. Hence, do not use the risk factors you want to evaluate as trait matching variables.

Learn more about confounding variables .

Statistical Analysis of a Case Control Study

Researchers frequently include two controls for each case to increase statistical power for a case-control study. Adding even more controls per case provides few statistical benefits, so studies usually do not use more than a 2:1 control to case ratio.

For statistical results, case-control studies typically produce an odds ratio for each potential risk factor. The equation below shows how to calculate an odds ratio for a case-control study.

Notice how this ratio takes the exposure odds in the case group and divides it by the exposure odds in the control group. Consequently, it quantifies how much higher the odds of exposure are among cases than the controls.

In general, odds ratios greater than one flag potential risk factors because they indicate that exposure was higher in the case group than in the control group. Furthermore, higher ratios signify stronger associations between exposure and the medical condition.

An odds ratio of one indicates that exposure was the same in the case and control groups. Nothing to see here!

Ratios less than one might identify protective factors.

Learn more about Understanding Ratios .

Now, let’s bring this to life with an example!

Example Odds Ratio in a Case-Control Study

The Kent County Health Department in Michigan conducted a case-control study in 2005 for a company lunch that produced an outbreak of vomiting and diarrhea. Out of multiple lunch ingredients, researchers found the following exposure rates for lettuce consumption.

| 53 | 33 | |

| 1 | 7 |

By plugging these numbers into the equation, we can calculate the odds ratio for lettuce in this case-control study.

The study determined that the odds ratio for lettuce is 11.2.

This ratio indicates that those with symptoms were 11.2 times more likely to have eaten lettuce than those without symptoms. These results raise a big red flag for contaminated lettuce being the culprit!

Learn more about Odds Ratios.

Epidemiology in Practice: Case-Control Studies (NIH)

Interpreting Results of Case-Control Studies (CDC)

Share this:

Reader Interactions

January 18, 2022 at 7:56 am

Great post, thanks for writing it!

Is it possible to test an odds ration for statistical significance?

January 18, 2022 at 7:41 pm

Hi Michael,

Thanks! And yes, you can test for significance. To learn more about that, read my post about odds ratios , where I discuss p-values and confidence intervals.

Comments and Questions Cancel reply

Study Design 101: Case Control Study

- Case Report

- Case Control Study

- Cohort Study

- Randomized Controlled Trial

- Practice Guideline

- Systematic Review

- Meta-Analysis

- Helpful Formulas

- Finding Specific Study Types

A study that compares patients who have a disease or outcome of interest (cases) with patients who do not have the disease or outcome (controls), and looks back retrospectively to compare how frequently the exposure to a risk factor is present in each group to determine the relationship between the risk factor and the disease.

Case control studies are observational because no intervention is attempted and no attempt is made to alter the course of the disease. The goal is to retrospectively determine the exposure to the risk factor of interest from each of the two groups of individuals: cases and controls. These studies are designed to estimate odds.

Case control studies are also known as "retrospective studies" and "case-referent studies."

- Good for studying rare conditions or diseases

- Less time needed to conduct the study because the condition or disease has already occurred

- Lets you simultaneously look at multiple risk factors

- Useful as initial studies to establish an association

- Can answer questions that could not be answered through other study designs

Disadvantages

- Retrospective studies have more problems with data quality because they rely on memory and people with a condition will be more motivated to recall risk factors (also called recall bias).

- Not good for evaluating diagnostic tests because it's already clear that the cases have the condition and the controls do not

- It can be difficult to find a suitable control group

Design pitfalls to look out for

Care should be taken to avoid confounding, which arises when an exposure and an outcome are both strongly associated with a third variable. Controls should be subjects who might have been cases in the study but are selected independent of the exposure. Cases and controls should also not be "over-matched."

Is the control group appropriate for the population? Does the study use matching or pairing appropriately to avoid the effects of a confounding variable? Does it use appropriate inclusion and exclusion criteria?

Fictitious Example

There is a suspicion that zinc oxide, the white non-absorbent sunscreen traditionally worn by lifeguards is more effective at preventing sunburns that lead to skin cancer than absorbent sunscreen lotions. A case-control study was conducted to investigate if exposure to zinc oxide is a more effective skin cancer prevention measure. The study involved comparing a group of former lifeguards that had developed cancer on their cheeks and noses (cases) to a group of lifeguards without this type of cancer (controls) and assess their prior exposure to zinc oxide or absorbent sunscreen lotions.

This study would be retrospective in that the former lifeguards would be asked to recall which type of sunscreen they used on their face and approximately how often. This could be either a matched or unmatched study, but efforts would need to be made to ensure that the former lifeguards are of the same average age, and lifeguarded for a similar number of seasons and amount of time per season.

Real-life Examples

Boubekri, M., Cheung, I., Reid, K., Wang, C., & Zee, P. (2014). Impact of windows and daylight exposure on overall health and sleep quality of office workers: a case-control pilot study. Journal of Clinical Sleep Medicine : JCSM : Official Publication of the American Academy of Sleep Medicine, 10 (6), 603-611. https://doi.org/10.5664/jcsm.3780

This pilot study explored the impact of exposure to daylight on the health of office workers (measuring well-being and sleep quality subjectively, and light exposure, activity level and sleep-wake patterns via actigraphy). Individuals with windows in their workplaces had more light exposure, longer sleep duration, and more physical activity. They also reported a better scores in the areas of vitality and role limitations due to physical problems, better sleep quality and less sleep disturbances.

Togha, M., Razeghi Jahromi, S., Ghorbani, Z., Martami, F., & Seifishahpar, M. (2018). Serum Vitamin D Status in a Group of Migraine Patients Compared With Healthy Controls: A Case-Control Study. Headache, 58 (10), 1530-1540. https://doi.org/10.1111/head.13423

This case-control study compared serum vitamin D levels in individuals who experience migraine headaches with their matched controls. Studied over a period of thirty days, individuals with higher levels of serum Vitamin D was associated with lower odds of migraine headache.

Related Formulas

- Odds ratio in an unmatched study

- Odds ratio in a matched study

Related Terms

A patient with the disease or outcome of interest.

Confounding

When an exposure and an outcome are both strongly associated with a third variable.

A patient who does not have the disease or outcome.

Matched Design

Each case is matched individually with a control according to certain characteristics such as age and gender. It is important to remember that the concordant pairs (pairs in which the case and control are either both exposed or both not exposed) tell us nothing about the risk of exposure separately for cases or controls.

Observed Assignment

The method of assignment of individuals to study and control groups in observational studies when the investigator does not intervene to perform the assignment.

Unmatched Design

The controls are a sample from a suitable non-affected population.

Now test yourself!

1. Case Control Studies are prospective in that they follow the cases and controls over time and observe what occurs.

a) True b) False

2. Which of the following is an advantage of Case Control Studies?

a) They can simultaneously look at multiple risk factors. b) They are useful to initially establish an association between a risk factor and a disease or outcome. c) They take less time to complete because the condition or disease has already occurred. d) b and c only e) a, b, and c

Evidence Pyramid - Navigation

- Meta- Analysis

- Case Reports

- << Previous: Case Report

- Next: Cohort Study >>

- Last Updated: Sep 25, 2023 10:59 AM

- URL: https://guides.himmelfarb.gwu.edu/studydesign101

- Himmelfarb Intranet

- Privacy Notice

- Terms of Use

- GW is committed to digital accessibility. If you experience a barrier that affects your ability to access content on this page, let us know via the Accessibility Feedback Form .

- Himmelfarb Health Sciences Library

- 2300 Eye St., NW, Washington, DC 20037

- Phone: (202) 994-2850

- [email protected]

- https://himmelfarb.gwu.edu

An official website of the United States government

The .gov means it’s official. Federal government websites often end in .gov or .mil. Before sharing sensitive information, make sure you’re on a federal government site.

The site is secure. The https:// ensures that you are connecting to the official website and that any information you provide is encrypted and transmitted securely.

- Publications

- Account settings

- My Bibliography

- Collections

- Citation manager

Save citation to file

Email citation, add to collections.

- Create a new collection

- Add to an existing collection

Add to My Bibliography

Your saved search, create a file for external citation management software, your rss feed, case control studies, affiliations.

- 1 University of Nebraska Medical Center

- 2 Spectrum Health/Michigan State University College of Human Medicine

- PMID: 28846237

- Bookshelf ID: NBK448143

A case-control study is a type of observational study commonly used to look at factors associated with diseases or outcomes. The case-control study starts with a group of cases, which are the individuals who have the outcome of interest. The researcher then tries to construct a second group of individuals called the controls, who are similar to the case individuals but do not have the outcome of interest. The researcher then looks at historical factors to identify if some exposure(s) is/are found more commonly in the cases than the controls. If the exposure is found more commonly in the cases than in the controls, the researcher can hypothesize that the exposure may be linked to the outcome of interest.

For example, a researcher may want to look at the rare cancer Kaposi's sarcoma. The researcher would find a group of individuals with Kaposi's sarcoma (the cases) and compare them to a group of patients who are similar to the cases in most ways but do not have Kaposi's sarcoma (controls). The researcher could then ask about various exposures to see if any exposure is more common in those with Kaposi's sarcoma (the cases) than those without Kaposi's sarcoma (the controls). The researcher might find that those with Kaposi's sarcoma are more likely to have HIV, and thus conclude that HIV may be a risk factor for the development of Kaposi's sarcoma.

There are many advantages to case-control studies. First, the case-control approach allows for the study of rare diseases. If a disease occurs very infrequently, one would have to follow a large group of people for a long period of time to accrue enough incident cases to study. Such use of resources may be impractical, so a case-control study can be useful for identifying current cases and evaluating historical associated factors. For example, if a disease developed in 1 in 1000 people per year (0.001/year) then in ten years one would expect about 10 cases of a disease to exist in a group of 1000 people. If the disease is much rarer, say 1 in 1,000,0000 per year (0.0000001/year) this would require either having to follow 1,000,0000 people for ten years or 1000 people for 1000 years to accrue ten total cases. As it may be impractical to follow 1,000,000 for ten years or to wait 1000 years for recruitment, a case-control study allows for a more feasible approach.

Second, the case-control study design makes it possible to look at multiple risk factors at once. In the example above about Kaposi's sarcoma, the researcher could ask both the cases and controls about exposures to HIV, asbestos, smoking, lead, sunburns, aniline dye, alcohol, herpes, human papillomavirus, or any number of possible exposures to identify those most likely associated with Kaposi's sarcoma.

Case-control studies can also be very helpful when disease outbreaks occur, and potential links and exposures need to be identified. This study mechanism can be commonly seen in food-related disease outbreaks associated with contaminated products, or when rare diseases start to increase in frequency, as has been seen with measles in recent years.

Because of these advantages, case-control studies are commonly used as one of the first studies to build evidence of an association between exposure and an event or disease.

In a case-control study, the investigator can include unequal numbers of cases with controls such as 2:1 or 4:1 to increase the power of the study.

Disadvantages and Limitations

The most commonly cited disadvantage in case-control studies is the potential for recall bias. Recall bias in a case-control study is the increased likelihood that those with the outcome will recall and report exposures compared to those without the outcome. In other words, even if both groups had exactly the same exposures, the participants in the cases group may report the exposure more often than the controls do. Recall bias may lead to concluding that there are associations between exposure and disease that do not, in fact, exist. It is due to subjects' imperfect memories of past exposures. If people with Kaposi's sarcoma are asked about exposure and history (e.g., HIV, asbestos, smoking, lead, sunburn, aniline dye, alcohol, herpes, human papillomavirus), the individuals with the disease are more likely to think harder about these exposures and recall having some of the exposures that the healthy controls.

Case-control studies, due to their typically retrospective nature, can be used to establish a correlation between exposures and outcomes, but cannot establish causation . These studies simply attempt to find correlations between past events and the current state.

When designing a case-control study, the researcher must find an appropriate control group. Ideally, the case group (those with the outcome) and the control group (those without the outcome) will have almost the same characteristics, such as age, gender, overall health status, and other factors. The two groups should have similar histories and live in similar environments. If, for example, our cases of Kaposi's sarcoma came from across the country but our controls were only chosen from a small community in northern latitudes where people rarely go outside or get sunburns, asking about sunburn may not be a valid exposure to investigate. Similarly, if all of the cases of Kaposi's sarcoma were found to come from a small community outside a battery factory with high levels of lead in the environment, then controls from across the country with minimal lead exposure would not provide an appropriate control group. The investigator must put a great deal of effort into creating a proper control group to bolster the strength of the case-control study as well as enhance their ability to find true and valid potential correlations between exposures and disease states.

Similarly, the researcher must recognize the potential for failing to identify confounding variables or exposures, introducing the possibility of confounding bias, which occurs when a variable that is not being accounted for that has a relationship with both the exposure and outcome. This can cause us to accidentally be studying something we are not accounting for but that may be systematically different between the groups.

Copyright © 2024, StatPearls Publishing LLC.

PubMed Disclaimer

Conflict of interest statement

Disclosure: Steven Tenny declares no relevant financial relationships with ineligible companies.

Disclosure: Connor Kerndt declares no relevant financial relationships with ineligible companies.

Disclosure: Mary Hoffman declares no relevant financial relationships with ineligible companies.

- Introduction

- Issues of Concern

- Clinical Significance

- Enhancing Healthcare Team Outcomes

- Review Questions

Similar articles

- Folic acid supplementation and malaria susceptibility and severity among people taking antifolate antimalarial drugs in endemic areas. Crider K, Williams J, Qi YP, Gutman J, Yeung L, Mai C, Finkelstain J, Mehta S, Pons-Duran C, Menéndez C, Moraleda C, Rogers L, Daniels K, Green P. Crider K, et al. Cochrane Database Syst Rev. 2022 Feb 1;2(2022):CD014217. doi: 10.1002/14651858.CD014217. Cochrane Database Syst Rev. 2022. PMID: 36321557 Free PMC article.

- Epidemiology Of Study Design. Munnangi S, Boktor SW. Munnangi S, et al. 2023 Apr 24. In: StatPearls [Internet]. Treasure Island (FL): StatPearls Publishing; 2024 Jan–. 2023 Apr 24. In: StatPearls [Internet]. Treasure Island (FL): StatPearls Publishing; 2024 Jan–. PMID: 29262004 Free Books & Documents.

- Risk factors for Kaposi's sarcoma in HIV-positive subjects in Uganda. Ziegler JL, Newton R, Katongole-Mbidde E, Mbulataiye S, De Cock K, Wabinga H, Mugerwa J, Katabira E, Jaffe H, Parkin DM, Reeves G, Weiss R, Beral V. Ziegler JL, et al. AIDS. 1997 Nov;11(13):1619-26. doi: 10.1097/00002030-199713000-00011. AIDS. 1997. PMID: 9365767

- Epidemiology of Kaposi's sarcoma. Beral V. Beral V. Cancer Surv. 1991;10:5-22. Cancer Surv. 1991. PMID: 1821323 Review.

- The epidemiology of classic, African, and immunosuppressed Kaposi's sarcoma. Wahman A, Melnick SL, Rhame FS, Potter JD. Wahman A, et al. Epidemiol Rev. 1991;13:178-99. doi: 10.1093/oxfordjournals.epirev.a036068. Epidemiol Rev. 1991. PMID: 1765111 Review.

- Setia MS. Methodology Series Module 2: Case-control Studies. Indian J Dermatol. 2016 Mar-Apr;61(2):146-51. - PMC - PubMed

- Sedgwick P. Bias in observational study designs: case-control studies. BMJ. 2015 Jan 30;350:h560. - PubMed

- Groenwold RHH, van Smeden M. Efficient Sampling in Unmatched Case-Control Studies When the Total Number of Cases and Controls Is Fixed. Epidemiology. 2017 Nov;28(6):834-837. - PubMed

Publication types

- Search in PubMed

- Search in MeSH

- Add to Search

Related information

Linkout - more resources, full text sources.

- NCBI Bookshelf

Research Materials

- NCI CPTC Antibody Characterization Program

Miscellaneous

- NCI CPTAC Assay Portal

- Citation Manager

NCBI Literature Resources

MeSH PMC Bookshelf Disclaimer

The PubMed wordmark and PubMed logo are registered trademarks of the U.S. Department of Health and Human Services (HHS). Unauthorized use of these marks is strictly prohibited.

- Search Menu

- Sign in through your institution

- Supplements

- Cohort Profiles

- Education Corner

- Author Guidelines

- Submission Site

- Open Access

- About the International Journal of Epidemiology

- About the International Epidemiological Association

- Editorial Team

- Editorial Board

- Advertising and Corporate Services

- Journals Career Network

- Self-Archiving Policy

- Dispatch Dates

- Contact the IEA

- Journals on Oxford Academic

- Books on Oxford Academic

Article Contents

Introduction, case–control studies in dynamic populations, case–control studies within cohorts, unity of the concept of density sampling from dynamic populations and sampling from cohorts, discussion: differences with classic case–control teaching, and consequences.

- < Previous

Case–control studies: basic concepts

- Article contents

- Figures & tables

- Supplementary Data

Jan P Vandenbroucke, Neil Pearce, Case–control studies: basic concepts, International Journal of Epidemiology , Volume 41, Issue 5, October 2012, Pages 1480–1489, https://doi.org/10.1093/ije/dys147

- Permissions Icon Permissions

The purpose of this article is to present in elementary mathematical and statistical terms a simple way to quickly and effectively teach and understand case–control studies, as they are commonly done in dynamic populations—without using the rare disease assumption. Our focus is on case–control studies of disease incidence (‘incident case–control studies’); we will not consider the situation of case–control studies of prevalent disease, which are published much less frequently.

Readers of the medical literature were once taught that case–control studies are ‘cohort studies in reverse’, in which persons who developed disease during follow-up are compared with persons who did not. In addition, they were told that the odds ratio calculated from case–control studies is an approximation of the risk ratio or rate ratio, but only if the disease is ‘rare’ (say, if <5% of the population develops disease). These notions are no longer compatible with present-day epidemiological theory of case–control studies which is based on ‘density sampling’. Moreover, a recent survey found that the large majority of case–control studies do not sample cases and control subjects from a cohort with fixed membership; rather, they sample from dynamic populations with variable membership. 1 Of all case–control studies involving incident cases, 82% sampled from a dynamic population; only 18% of studies sampled from a cohort, and only some of these may need the ‘rare disease assumption’ (depending on how the control subjects were sampled). Thus, the ‘rare disease assumption’ is not needed for the large majority of published case–control studies. In addition, different assumptions are needed for case–control studies in dynamic populations and those in cohorts to ensure that the odds ratios are estimates of ratios of incidence rates.

The underlying theory for case–control studies in dynamic populations has been developed in epidemiological and statistical journals and textbooks over several decades, 2–19 and its history has been described. 20 Still, the theory is not well known or well understood outside professional epidemiological and statistical circles. Introductory textbooks of epidemiology often fall back on methods of control sampling, which involve the ‘rare disease assumption’ as it was proposed by Cornfield in 1951, 3 because it seems easier to explain. 1 Moreover, several advanced textbooks or articles depict the different ways of sampling cases and control subjects from the point of view of a cohort with fixed membership. 13 , 18 This reinforces the view of case–control studies as constructed within a cohort, even though this applies to only a small minority of published case–control studies.

The purpose of this article is to present in elementary mathematical and statistical terms a simple way to quickly and effectively teach and understand case–control studies as they are commonly done in dynamic populations––without using the rare disease assumption. Our focus is on case–control studies of disease incidence (‘incident case–control studies’); we will not consider the situation of case–control studies of prevalent disease, which are published much less frequently, 1 except in certain situations as discussed by Pearce 21 (e.g. for diseases such as asthma in which it is difficult to identify incident cases).

The theory of case–control studies in dynamic populations cannot be explained before first going back to the calculation of incidence rates and risks in dynamic populations. In a previous article, we have reviewed the demographic concepts that underpin these calculations. 22 In the current article, these concepts will first be applied to case–control studies involving sampling from dynamic populations. Second, we discuss how to teach the theory in the situation of sampling from a cohort. In the third part, it is explained how these two distinct ways of sampling cases and control subjects can be unified conceptually in the proportional hazards model (Cox regression). Finally, we discuss the consequences of this way of teaching case–control studies for understanding the assumptions behind these studies, and for appropriately designing studies. We propose that the explanation of case–control studies within dynamic populations should become the basis for teaching case–control studies, in both introductory and more advanced courses.

Basic teaching

To understand the application of the basic concepts of incidence rate calculations to case–control studies, we start with the demographic perspective of a dynamic population in which we calculate and compare incidence rates of disease. 22

Suppose that investigators are interested in the effect of oral contraceptive use on the incidence of myocardial infarction among women of reproductive age. They might investigate this in a large town in a particular calendar year (we base this example loosely on one of the first case–control studies that investigated this association 23 ). The time-population structure of the study is depicted in Figure 1 .

The underlying dynamic ‘source’ population of a study of myocardial infarction (MI) and oral contraceptive use. The bold undulating lines show the fluctuating number of users and non-users of oral contraceptives in a population that is in a steady state. The finer lines below it depict individuals who enter and leave the populations of users and non-users. Closed circles indicate cases of MI emanating from the population. For users and non-users separately, an incidence rate (IR) of MI can be calculated. The incidence rate ratio (IRR) can be used to compare the incidence of MI between users and non-users. In the description of the example in the text, the time t was set to one calendar year. Figure adapted from Miettinen 9

In Figure 1 , for the sake of simplicity, imagine that, on average, 120 000 young women of reproductive age (between ages 15 and 45 years) who have never had coronary heart disease (CHD), are living in the town, on each day during the calendar year of investigation. This is a dynamic population: each day, new young women will become 15 years old, others will turn 46, some will leave town and others will come to live in the town, some will develop CHD and be replaced by others who do not have the disease and so forth. Such a population can be safely regarded as being ‘in steady state’. The demographic principle of a steady-state population was explained in our previous article; 22 in brief, it assumes that over a small period, e.g. a calendar year, the number of people in a population is approximately constant from day to day because the population is constantly depleted and replenished at about the same rate. It was also explained why this assumption holds, even if the population is not perfectly in a steady state. 22 Thus, we take it that each day of the year, ∼120 000 women of reproductive age, free of clinically recognized CHD, live in the town. Suppose that, on average, 40 000 women use oral contraceptives and 80 000 do not. Again, these are two dynamic subpopulations that can be regarded as being in a steady state. Women start and stop using oral contraceptives for various reasons and switch from use to non-use and back again. As such, in one calendar year, we have 40 000 woman-years of pill use and 80 000 woman-years of non-use, free of CHD.

Suppose that a group of investigators surveys all coronary care units in the town each week to identify all women, aged 15–45 years, admitted with acute myocardial infarction during that period. When a young woman is admitted, the investigators enquire whether she was on the pill––and whether she had previously had a coronary event (if she had, she is excluded from the study). Suppose that, in total, 12 women were admitted for first myocardial infarction during the year of study: eight pill users and four non-users. That produces an incidence rate of 8/40 000 woman-years among pill users and 4/80 000 woman-years among non-users. The ratio of these incidence rates becomes (8/40 000 woman-years)/(4/80 000 woman-years), which is a rate ratio of 4, indicating that women on the pill have an incidence rate of myocardial infarction that is four times that of those not on the pill.

Transformation to a case–control study

In total, 12 cases arise from the population: eight users and four non-users. Those are the potential cases for a case–control study in which the investigators would survey all coronary care units each week of the year. Suppose that the investigators, as their next step, would take a random sample of 600 control subjects from the total source population of the cases (the total of 120 000), by asking 600 women aged 15–45 years, without previous CHD, whether they are ‘on the pill’ at the time the question is asked. Then, on whatever day of the year, this sample of control subjects will include, on average, 200 users and 400 non-users of oral contraceptives. These numbers represent the underlying distribution of woman-years of users and non-users. Together with the cases, this is the complete case–control study (see Table 1 ).

Layout of case-control data sampled from dynamic population: study of occurrence of myocardial infarction in users vs non-users of oral contraceptives, corresponding to Figure 1

| . | Myocardial infarction . | Control subjects . |

|---|---|---|

| Oral contraceptive use | ||

| Yes | 8 | 200 |

| No | 4 | 400 |

| Odds ratio | 4 | |

| . | Myocardial infarction . | Control subjects . |

|---|---|---|

| Oral contraceptive use | ||

| Yes | 8 | 200 |

| No | 4 | 400 |

| Odds ratio | 4 | |

From Table 1 , an odds ratio can be calculated as (8 × 400)/(4 × 200). This exactly equals the ratio of the incidence rates in the underlying population. Algebraically: the incidence rate ratio from the complete dynamic population, which we calculated earlier, can be easily rewritten as (8/4)/(40 000 woman-years/80 000 woman-years). Between parentheses in the numerator of this formula is the number of pill users divided by the number of non-users among all women newly admitted with CHD (= cases in the case–control study). In the denominator, we find the proportion of woman-years on the pill divided by the proportion of woman-years of non-use. It is immediately obvious that—if the steady-state assumption holds—we can estimate the latter proportion directly from the sample of 600 women (= control subjects in case–control study). Among the 600 control subjects, the ratio of exposed to unexposed is expected to be the same as the ratio of the woman-years—except for sampling fluctuations. Thus, what we do in a case–control study is to replace the denominator ratio (40 000 woman-years /80 000 woman-years) by a sample (200/400). We still obtain, on average, the same rate ratio of 4. It follows that to estimate the rate ratio, we do not have to measure, nor to estimate, all the person-years of pill-using and non-using women in town; we can simply determine the ratio of those woman-years by asking a representative sample of women free of CHD from the population from which the cases arise, about their pill use. The complete dynamic population is called the ‘source population’ from which we identify the cases and the sample of control subjects, and the period over which cases and control subjects are identified is the ‘time window’ of observation, also called the ‘risk period’.

The ‘odds ratio’ which is calculated from Table 1 is technically also known as the ‘exposure odds ratio’, as it is the ‘odds of exposure’ in the cases divided by the ‘odds of exposure’ in the controls: (8/4)/(200/400) = 4, the same as the ratio of incidence rates in the whole source population. The great advantage of case–control studies is that we can calculate relative incidences of disease in a population, by collecting all the data for the numerator (by collecting cases in hospitals or registries where they naturally come together), and sampling control subjects from the denominator, i.e. sampling ‘control subjects’ to estimate the relative proportions (exposed vs non-exposed) of the person-years of the exposure of interest in the source population. Thus, one achieves the same result as in a comprehensive population follow-up, at much less expense of time and money. Just imagine the effort of having to do a follow-up study of all 120 000 women of reproductive age in town, also keeping track of when they move in and out of town and constantly updating their oral contraceptive use in a particular calendar year!

Advanced teaching

Cohorts vs dynamic populations.

For researchers who are used to think in terms of clinical cohorts, it can be difficult to understand that populations are not depleted: is it not true that the people with a particular risk factor will develop some disease more often, and thus in the course of time, there will be less of them who are still candidates for developing the disease? That will be true in cohorts because their membership is fixed, but not in dynamic populations. One way to understand this is to think of genetic exposures. People with blood group O develop clotting disorders more frequently, whereas people with blood group A develop more often gastric cancer. However, in a dynamic population, the numbers of people with blood group O or A are not constantly depleted—blood group distribution is fairly constant over time, as new people are born with these blood groups so that an equilibrium is maintained. 22

Another way to understand this concept is to think about an imaginary town and the cases of myocardial infarction that are enrolled in a study. For the aforementioned discussion, we assumed that we were studying all women living in a town during some time over the course of one calendar year (this could be the whole year or a few months). The situation would be entirely different if we restricted our study to all women who lived in the town on the 1 January of that year: then we would only count the myocardial infarctions that happened during this year in women who had been living in town on the 1 January; indeed, the number of women on the pill might decline more than the number of women not on the pill because the myocardial infarctions predominantly occur in the users. That situation would be akin to a clinical cohort study, i.e a study with fixed membership defined by a single common event. 22 However, in a dynamic population, a myocardial infarction that happens in a woman who moved into town during the year also counts in the numerator; she and the other women who move into town replenish the denominator because other women move out. By and large, as with blood groups, the population denominator remains constant in terms of its exposure distributions: the woman-years of oral contraceptive use vs non-use. If the population is truly in steady state, it does not matter when the control subjects are sampled—at the beginning, at the end or at the halfway point of the calendar period (the time window or ‘risk period’).

To refine the concept, the members of a dynamic population do not necessarily have to be present for long periods in the population—as might be surmised from the examples about towns and countries of which one is either an inhabitant or not, and usually for several years. Members of a dynamic population may also switch continuously between being in and out of the population. 22 Take a study on car accidents and mobile phone use by the driver. The risk periods of interest are the periods when people drive. The exposure of interest is phone use. In a case–control study, car accidents are sampled, and it is ascertained (say, via mobile phone operators) whether the driver was phoning at the time of the accident. Control moments might be sampled from the same driver (say, in the previous week) or from other drivers, by sampling other moments of time when they were driving; for each of these control moments, it might be ascertained, via the same mechanism as for the cases, whether they were phoning while driving. These control moments are contrasted with the moment of the accident (the case). If the same driver is used as his or her own control, this type of case–control study is called a ‘case–crossover study’. 24 From the example, it can be understood readily that such a case–control study compares the incidence rate of accidents while driving and phoning vs the incidence rate of accidents while driving and not phoning. 25

What if the exposure distribution of the population is not in steady state?

But what if the exposure distribution in the population is not in steady state? For example, suppose that one wants to investigate in a case–control study whether two different types of oral contraceptives give a different risk of venous thrombosis: ‘third-generation oral contraceptives’ vs ‘second-generation oral contraceptives’ (this was once a real and hotly debated question 26 ). Suppose further that the newer ‘third-generation oral contraceptives’ are strongly marketed, and that their market share clearly increases in the course of the calendar year. That situation is depicted in Figure 2 .

Sampling from the middle of the ‘risk period’ when the exposure distribution is not in steady state. The bold undulating lines show the increasing use of one type of oral contraceptives and the decreasing use of the other type during the time period (risk period). The finer lines below it depict individuals who enter and leave the populations of users of these types of oral contraceptives. Closed circles indicate cases of deep venous thrombosis (DVT) emanating from the population. B and D represent the numbers of users of one type or the other contraceptive at a cross-section in the middle of the time period. Incidence rates (IRs) of DVT can be calculated for both populations separately, and an incidence rate ratio (IRR) can be used to compare these two incidence rates. In a case–control study, B and D are estimated by ‘b’ and ‘d’, the numbers of users of one type or the other type of oral contraceptives in a sample from the source population taken in the middle of the period. The algebraic redrafting of the IRR shows that a ratio of IRs is algebraically equivalent to an ‘exposure odds ratio’ or the ‘cross-product’ that is obtained in a case–control study

There are two solutions:

Sample the control subjects in the middle of the period when the cases accrued, and thereby use the additional assumption that the rise (or fall) of the use of a particular brand of pill is roughly linear over the risk period. Then the control subjects will still represent the average proportion of person-years over the risk period. This is depicted in Figure 2 and is the same solution as is used to calculate person-years (i.e. the denominator) when populations are not in steady state [see previous article on the calculation of incidence rates for explanation]. 22 Alternatively, if one assumes that the incident cases in the dynamic population are evenly spread over time, one might sample control subjects evenly over time.

The more sophisticated solution is the one that researchers often use spontaneously: they sample a (number of) control subject(s) each time there is a case, which amounts to ‘matching on calendar time’. Then the control subject(s) will reflect the underlying population distribution of exposure at each point in time a case occurs, and any assumption about linearity is not needed. This is the most exact solution and is represented in Figure 3 . Matching on calendar time can be done in two ways: (i) invite the control subject(s) around the same calendar date as the case and ask them about their exposure (at that time or at previous times if exposure has a lag time to produce disease); or (ii) if control subjects are invited at a later point in time, present them with an ‘index date’, which is the date as the event of the matching case, and question them and/or measure their exposures for that index date. If control subjects are matched on calendar time, then it is appropriate to take the time matching (and, of course, any other matching factors) into account in the analysis, or at least to check whether it is necessary to control for them.

Case–control sampling in dynamic populations when a control is sampled each time a case occurs: matching on calendar time. Persons move in or out of the population by mechanisms such as birth or death, or move in or out from this population to another. Person-time is indicated by horizontal lines. The time axis is calendar time. The sampling of the control subjects is ‘matched on calendar time’: each time a case occurs, one or more control subjects are sampled. Cases and control subjects can be either exposed or unexposed (not shown here). A person who will become a case can be a control subject earlier, and multiple control subjects or even a variable number of control subjects can be drawn for each case

Hospital-based case–control studies

In most examples presented earlier, the patients are assumed to be sampled from a defined geographical population (via disease registries or by having access to all hospitals of some region), and control subjects are sampled from the underlying dynamic population of this geographical area. If cases from a case–control investigation are sampled from one or more hospitals that do not reflect a well-defined geographic population, still each hospital has a ‘catchment population’, consisting of the patients who will be admitted to that hospital when they develop a particular disease. Such a catchment population can be seen as a dynamic population, with inflow and outflow depending on patient and referring doctor preferences, religious or insurance affiliations, or on the reputation of a particular hospital for particular diseases and so forth. To obtain control subjects for such cases, the investigator should consider patients who are admitted to the same hospital and come from the same catchment population—meaning that if they had developed the case disease, they would have been admitted to that same hospital. This approach obviously has some risks in that the control disease may be associated with the exposure that one wants to study; that risk can (it is hoped) be minimized by using a mix of control diseases, none of which is known to be associated with the exposure under study. 27 Still, the principle of sampling control subjects from a dynamic population remains the same, whether the controls are population-based or hospital-based.

The early case–control study on oral contraceptives and myocardial infarction, which inspired the example presented earlier, sampled cases from a number of coronary care units that were surveyed in one geographically defined hospital area in the UK; for each case interviewed, three women of the same age who were discharged after some acute or elective medical or surgical condition were similarly interviewed about their use of oral contraceptives. 23 Likewise, the first case–control studies on smoking and lung cancer were hospital-based, and control subjects were non-cancer patients being present in the same wards or the same hospital as the lung cancer patients. 2 , 28

Doing a case–control study by sampling from a cohort with fixed membership is relatively rare—a recent survey found that it only occurs in 18% of published case–control studies. 1 It is mostly done when investigators have data available from a cohort, and when it is too expensive to go back and assess the exposures of everybody in the cohort. For example, in an occupational cohort study, personnel records may be available for all cohort members from date of employment, but it may take a considerable amount of work to assess these work histories and estimate cumulative exposures to particular chemicals, whether by using a job-exposure matrix or by an expert panel assessment. 29

Another example is the ‘re-use’ of data or samples from a randomized controlled trial (RCT) for a subsequent investigation. For example, the data from the ‘Physician’s Health Study’ 30 were re-used several years after the trial was finished for a new genetic case–control study; baseline blood samples of participants who developed cardiovascular end points in the trial were used, as were blood samples of matched participants in the trial who remained free of those diseases, and the frequency of one genetic factor (Factor V Leiden) was compared between these cases and control subjects. This investigation thereby considered the trial data as a single cohort in which new exposures were assessed, irrespective of the original randomization.

Figure 4 depicts a cohort with fixed membership from time 0. The cases accrue in the course of the follow-up in the exposed and unexposed part of the cohort. The available cohort data may only relate to exposure status at baseline (as in the aforementioned RCT example), but may also indicate changes in exposure over time, for example, if repeated measurements were done in the cohort study, or if time-related exposure information can be assessed from personnel records, prescribing records or other sources (as in the occupational example).

| Measure . | Definition . | Alternative formulation . |

|---|---|---|

| Odds ratio under exclusive sampling | ||

| Risk ratio under inclusive sampling |

Figure refers to methods 1 and 2 in text under subheading ‘Case–control studies within cohorts’, and is adapted from Rodrigues et al. [13] and Szklo and Nieto [18]

For each case, one or more control subjects are selected from the overall cohort, and the exposure statuses of the case and control subjects are determined at the time they are sampled. There are three options to sample control subjects: 12 , 13 , 18

As in the aforementioned RCT example, investigators often sample control subjects from the people who have still not developed the disease of interest at the end of follow-up (this is termed ‘cumulative incidence sampling’ or ‘exclusive sampling’), and exposure status at beginning of follow-up is used for these cases and controls. As shown algebraically in many textbooks, in that situation, the odds ratio is exactly the same (on average) as the corresponding odds ratio from the full cohort study, and this will approximate the risk ratio or rate ratio (in the full cohort study) only if the disease is rare (say, <5% of exposed and non-exposed develop the disease). This is the ‘rare disease assumption’, as historically first proposed by Cornfield in 1951. 3 It can be seen from Figure 4 that if the disease is rare, even in the exposed (sub)cohort, the ratio of people with and without exposure among those without disease at the end of the follow-up will remain about the same as at the beginning of the follow-up, which is why the ‘rare disease assumption’ works.

An imaginative solution, first proposed by Kupper et al. , 8 is to sample control subjects from all those in the cohort at the beginning of follow-up instead of at the end (‘case-cohort’ or ‘inclusive sampling’). At the beginning of the follow-up, all persons are still disease free (if they are not, then they would not have been included in the cohort). Then, the control subjects reflect the proportion exposed among the source population at the start of follow-up. Some of the control subjects who are sampled at baseline may become cases during follow-up. This seems strange at first sight, but it is not: if in a cohort study or an RCT, the risk is calculated, one uses all persons developing a disease outcome in the numerator, and divides by the denominator, which consists of all people who were present at start of follow-up, including those who will later turn up in the numerator. As can be seen from Figure 4 , sampling from the persons present at the beginning of the follow-up makes the odds ratio from the case–control study exactly the same (on average) as the risk ratio from the full cohort study. This can be understood most easily if one imagines taking a control sample of 100%, that is, all persons present at the beginning: then the odds ratio in the case–control study will be exactly the same as the risk ratio from the cohort study. Next, if one imagines taking a 50% sample for the control subjects, the odds ratio will remain the same (on average). One complication with this method is the calculation of the standard error of the odds ratio, as some persons are both cases and control subjects; different solutions exist. 31 A further complication is that, just as with the estimation of risks (which this sampling scheme corresponds to), losses to follow-up for other reasons than developing the disease that is studied are not easily taken into account; such losses to follow-up may produce bias if they are substantial and differ between exposed and unexposed.

The third option is to sample control subjects longitudinally throughout the risk period (i.e. not just at the beginning or just at the end). Throughout the follow-up of a cohort, the numbers of both exposed and unexposed persons who are free of disease will decrease, and people may be lost to follow-up for other reasons. Moreover, persons may move between exposure categories. The ‘royal road’ is to sample one or more control subjects at each point in time when a case occurs (‘density sampling’, ‘risk-set sampling’ or ‘concurrent sampling’) and determine the exposure status of cases and control subjects at that point in time. This is depicted in Figure 5 . By this sampling approach, the odds ratio from the case–control study will estimate the rate ratio from the cohort study. This is the equivalent of ‘matching on time’ in dynamic populations. This approach is most correct theoretically, but can only be used for cohorts when one has information about disease status of all persons at regular intervals during follow-up (e.g. when cancer incidence or mortality data are available over time).

Third method of sampling from a cohort: longitudinal sampling, also called concurrent sampling, density sampling or risk-set sampling. Persons start follow-up at inclusion in the cohort (e.g. date of surgery) and are followed until either end point occurs (person becomes a case), or the last calendar day of the study. Persons are indicated by fine lines from start of follow-up onwards. The time axis is follow-up time from inclusion (time 0). The longest period of follow-up is by persons who enter the cohort on the calendar day that the study starts; persons entering later will have shorter follow-up because they will be withdrawn from the study at the last calendar day of the study. Cases and control subjects can be either exposed or non-exposed (not shown here). A person who will become a case can be a control subject earlier, and multiple control subjects, or even a variable number of control subjects, can be drawn for each case. In text, see method 3, under subheading ‘Case–control studies within cohorts’

The first solution corresponds to the original theory proposed by Cornfield, 3 and requires the ‘rare disease assumption’ if the goal is to estimate rate ratio or risk ratios; it was the most frequently used method in case–control studies within cohorts in the past—and that approach was used in almost all case–control studies based on cohorts that were identified in the review by Knol et al. 1 Solution 2 still pertains to cohort thinking, but has an imaginative solution to calculate risk ratios; it is often called a ‘case–cohort’ study, and is particularly useful in studies in which a single control sample can be used for multiple case–control studies of various outcomes. Solution 3 is the more sophisticated development in case–control theory, in which the case–control odds ratio estimates the rate ratio from the cohort population over the follow-up period without the need for any rare disease assumption. 10 , 11 However, it is used relatively rarely. 1

A note about terminology: the term ‘nested case–control studies’ seems to be mostly used to denote case–control studies within cohorts which use the third sampling option. However, it is sometimes loosely used to denote all types of case–control sampling within a cohort.